The Lesson

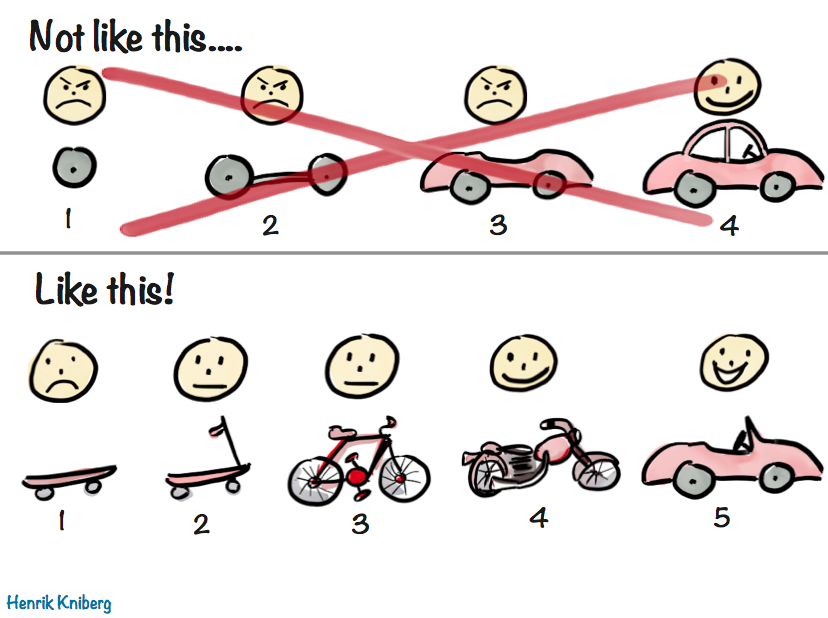

The Minimum Viable Product (MVP) is a concept from Lean Startup methodology. Eric Ries defines it as:

"The version of a new product which allows a team to collect the maximum amount of validated learning about customers with the least effort."

At its core, MVP is about getting a working product into users' hands quickly so you can learn what actually matters. In analytics reporting, this approach helps ensure solutions stay aligned with stakeholder needs and deliver value early, rather than after long development cycles.

Why This Matters in Analytics

In analytics projects, we often spend significant time gathering requirements and trying to fully understand stakeholder needs before building anything. Techniques like the Five Whys can help clarify the underlying problem, but many reporting processes are still largely linear: gather requirements, build a dashboard, deliver it, and move on.

The challenge is that stakeholders frequently don't know exactly what they need until they see real data in a working tool. A static mock-up or a late-stage review rarely surfaces the kinds of feedback that emerge from real usage.

An MVP approach changes this dynamic. By delivering a working version early and iterating on it, analysts can incorporate user feedback, improve usability, and refine metrics in ways that increase adoption and long-term value. Early engagement also builds in user training, surfaces bugs sooner, and keeps stakeholders involved throughout. All of these make it far more likely that the reporting is actually used.

My Experience

Earlier in my analytics career, I worked on a reporting project focused on identifying orders, sellers, and customers with a high proportion of $0 revenue units. Multiple stakeholders were interested in understanding the drivers behind these transactions.

I followed the process I was used to: meetings to gather requirements, designing dashboards for different stakeholder needs, creating a mock-up, and iterating on small changes. Eventually, I delivered a full set of dashboards -- eight in total.

At first, two of the dashboards saw some use. The remaining six were rarely touched, and over time even the commonly used ones fell out of use as priorities shifted.

Looking back, the issue wasn't the technical work or the intent behind the reporting. The problem was the process. We built a complete solution before validating which parts of it actually mattered.

If we had started with a small MVP, we likely would have discovered much earlier that only a subset of the dashboards was necessary. Stakeholders also would have had more opportunity to ask follow-up questions, refine metrics, and shape the next phase of reporting based on real usage.

The Breakdown

At the time, I believed the analytics workflow was straightforward: gather requirements, build the solution, deliver it, and move on. That was the process my team followed, and I didn't yet have the experience to question it.

What was missing was an iterative mindset -- the same mindset used in product and software development. Frameworks like MVP and Build-Measure-Learn emphasize delivering value early, observing how users interact with the product, and improving based on real feedback rather than assumptions.

Without that loop, it's easy to overbuild, misjudge priorities, or deliver tools that technically meet requirements but fail to provide lasting business value.

How I Think About It Now

Today, I approach reporting projects with iteration in mind from the beginning. My goal is to identify the smallest version of a report or dashboard that delivers meaningful value and get that version into stakeholders' hands as quickly as possible.

From there, reporting becomes a living product rather than a one-time deliverable. Metrics can be refined, features added intentionally, and low-value components avoided altogether. Equally important, this approach builds stakeholder engagement early, which is often the difference between a dashboard that is used and one that quietly fades away.

Practical Takeaways

- Identify the core questions or decisions the reporting must support before designing full solutions.

- Build a minimum viable version and get it into users' hands quickly.

- Use early releases to gather feedback on usability, metrics, and workflow -- not just correctness.

- Incorporate user training and engagement as part of the rollout, not as an afterthought.

- Treat reporting as an iterative product that evolves or is retired based on business value.

Looking Ahead

In a future post, I'll talk about a technique that pairs naturally with MVP thinking: the Five Whys. It's one of the most effective ways I've found to move past surface-level requests and uncover the real business problem that reporting needs to solve.